AI Dystopia

The rapid rise of AI is set to fundamentally reshape society, posing challenges that go well beyond economic disruption. The immediate threat is widespread job displacement, which risks creating a severe class divide where wealth and power are concentrated with those controlling the algorithms. AI also deeply threatens democracy and human rights through opaque decision-making that can amplify existing biases and lead to systemic discrimination based on factors such as economic and educational status, race, religion, or political beliefs. Furthermore, AI enables pervasive surveillance and the mass generation of sophisticated disinformation, such as deepfakes and AI-curated content used to control and manipulate users on social media platforms. Ultimately, ensuring AI serves humanity requires a commitment to ethical governance, preventing social stratification and the erosion of privacy, and promoting genuine human connection.

AI's Impact on Jobs, Privacy, and Society

52% of Americans say they feel more concerned than excited about the increased use of artificial intelligence.*

30% of current U.S. jobs could be automated by 2030; 60% will have tasks significantly modified by AI.*

53% of adults in the U.S. say AI will worsen people’s ability to think creatively.*

300 million jobs could be lost to AI globally, representing 9.1% of all jobs worldwide.*

59% of workers will require upskilling or reskilling by 2030.*

Amazon has laid off 57,000+ workers since 2022. The company now has more than one million robots working in its warehouses.*

76% of Americans say it’s extremely or very important to be able to tell whether pictures, videos, and text were made by AI or by people.*

60% of Americans say they’d like more control over how AI is used.*

23.5% of U.S. companies have replaced workers with ChatGPT or similar AI tools.*

53% of Americans say AI is doing more to hurt than help people keep their personal information private.*

Half of all entry-level white-collar jobs could be eliminated within the next five years, according to Dario Amodei, co-founder and CEO of Anthropic, a leading AI company.*

50% of adults in the U.S. say AI will worsen rather than improve people’s ability to form meaningful relationships.*

Perspectives on AI

Discover what some of the world’s most recognized thinkers have to say about AI’s role in shaping our future.

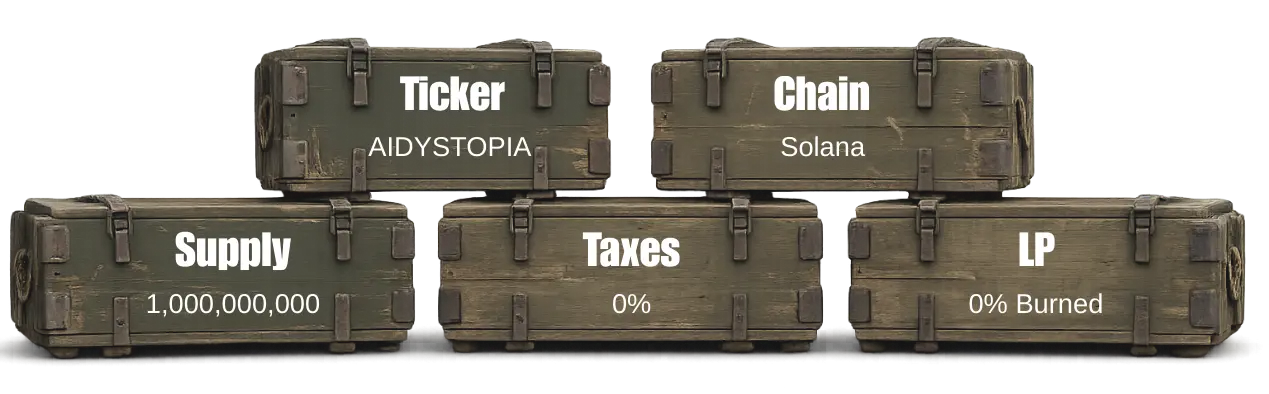


9 Major AI Risks

1. Unemployment

The fear that machines will replace human labor is a primary concern. The McKinsey Global Institute estimates that by 2030, between 75 million and 375 million workers (roughly 3% to 14% of the global workforce) may need to switch occupations and acquire new skills as AI automates traditional roles.

2. Lack of Transparency

AI models are often “black boxes” where the internal logic is hidden, either due to technical complexity or proprietary secrets. This lack of visibility makes it difficult to debug systems or understand why an algorithm produced a specific, potentially flawed, result.

3. Algorithmic Bias

When AI is trained on imbalanced or historically biased data, it can reproduce and amplify existing societal prejudices. This leads to discriminatory outcomes in hiring, lending, and law enforcement, deepening inequalities based on race, gender, and age.

4. Mass Surveillance

AI can process vast amounts of data to build precise personal profiles, even predicting a person’s future location based on past habits. This constant monitoring erodes the right to privacy, making personal movements and behaviors visible to corporations and governments alike.

5. Disinformation

Deepfakes and bots accelerate the spread of convincing misinformation, making it harder to distinguish reality from fiction. Furthermore, AI-driven recommendation engines trap users in “echo chambers,” reinforcing extremist views by serving content that aligns with existing beliefs.

6. Intellectual Property Theft

Tech giants often train AI models on copyrighted books, art, and music without compensating the original creators. This has led to widespread legal battles, as artists argue their livelihoods are being undermined by companies monetizing “stolen” intellectual property.

7. Weaponization

The development of AI-driven weaponry, such as autonomous drones and robotic reconnaissance dogs, marks a shift toward a new era of warfare. The ultimate fear is the deployment of lethal autonomous weapons systems that can target and kill without direct human intervention.

8. Environmental Impact

The compute power required to train and run large AI models is immense. Data centers consume electricity at rates comparable to small nations and require millions of gallons of water for cooling, contributing significantly to carbon emissions and resource scarcity.

9. Big Tech Monopolies

A handful of companies—including Google, Microsoft, Meta, NVIDIA, OpenAI, Apple, Tesla, Anthropic, and Amazon—dominate the AI landscape, spending upwards of $30 billion annually on R&D and acquisitions. This concentration of power allows a few private entities to dictate the direction of technology and potentially influence democratic governments.

Next Steps

Invest in Ethical AI Research

Support institutions that prioritize safety, transparency, and fairness.

Enact Global AI Governance

Develop international frameworks to manage risk and prevent misuse.

Reform Education Systems

Focus on critical thinking, creativity, and digital literacy.

Encourage Public Discourse

Involve diverse voices in shaping the AI narrative.

Design for Inclusion

Ensure the public is represented in AI design and policy.

Sources

U.S. Bureau of Labor Statistics

World Economic Forum Future of Jobs Report

Pew Research Center

Legal

The content on this site is for informational and entertainment purposes only. Nothing constitutes financial, legal, or investment advice. Digital assets are volatile and involve risk. Do your own research. By using this site you agree to our terms.